Depth Estimation — LiDAR + RGB

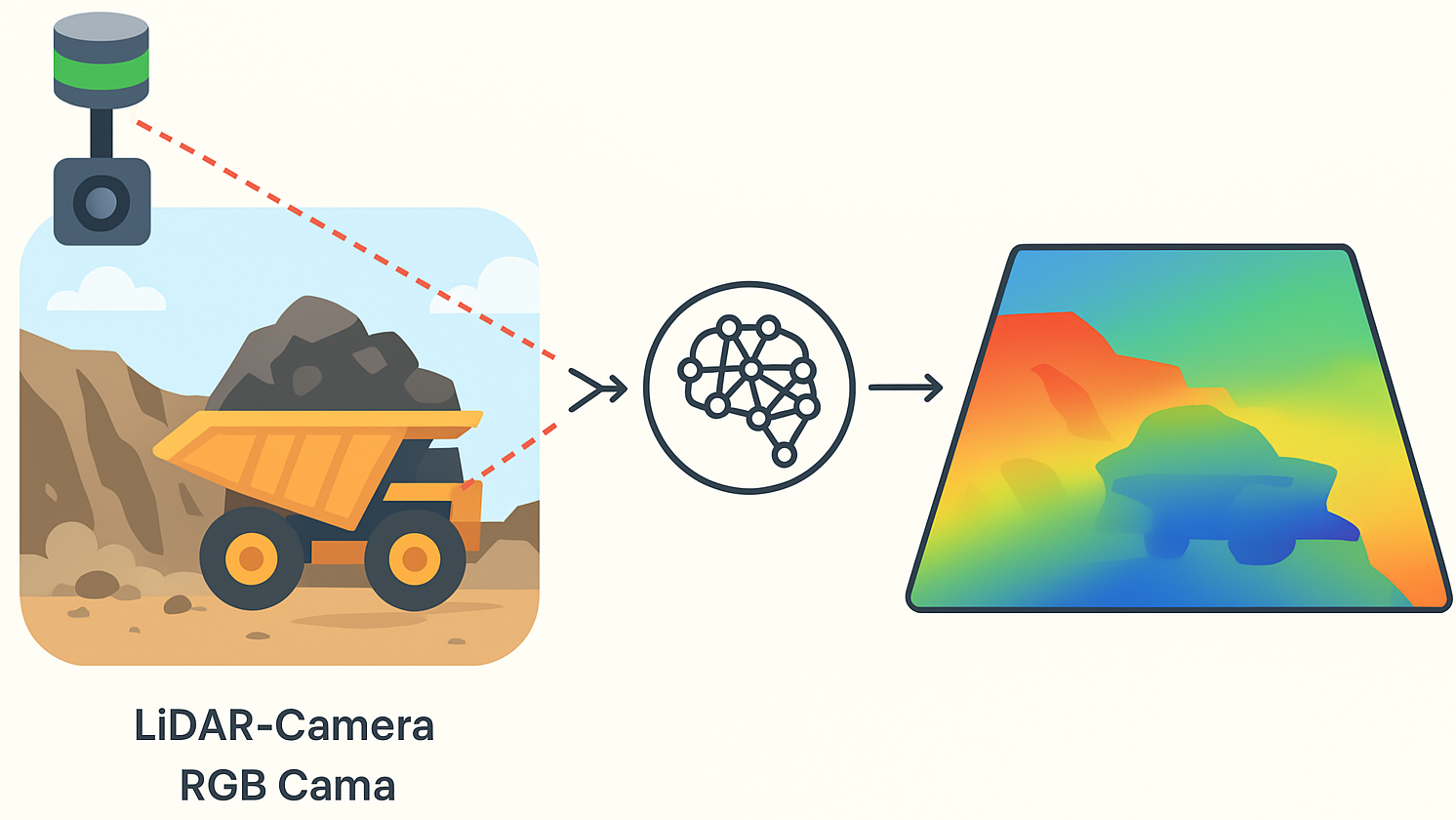

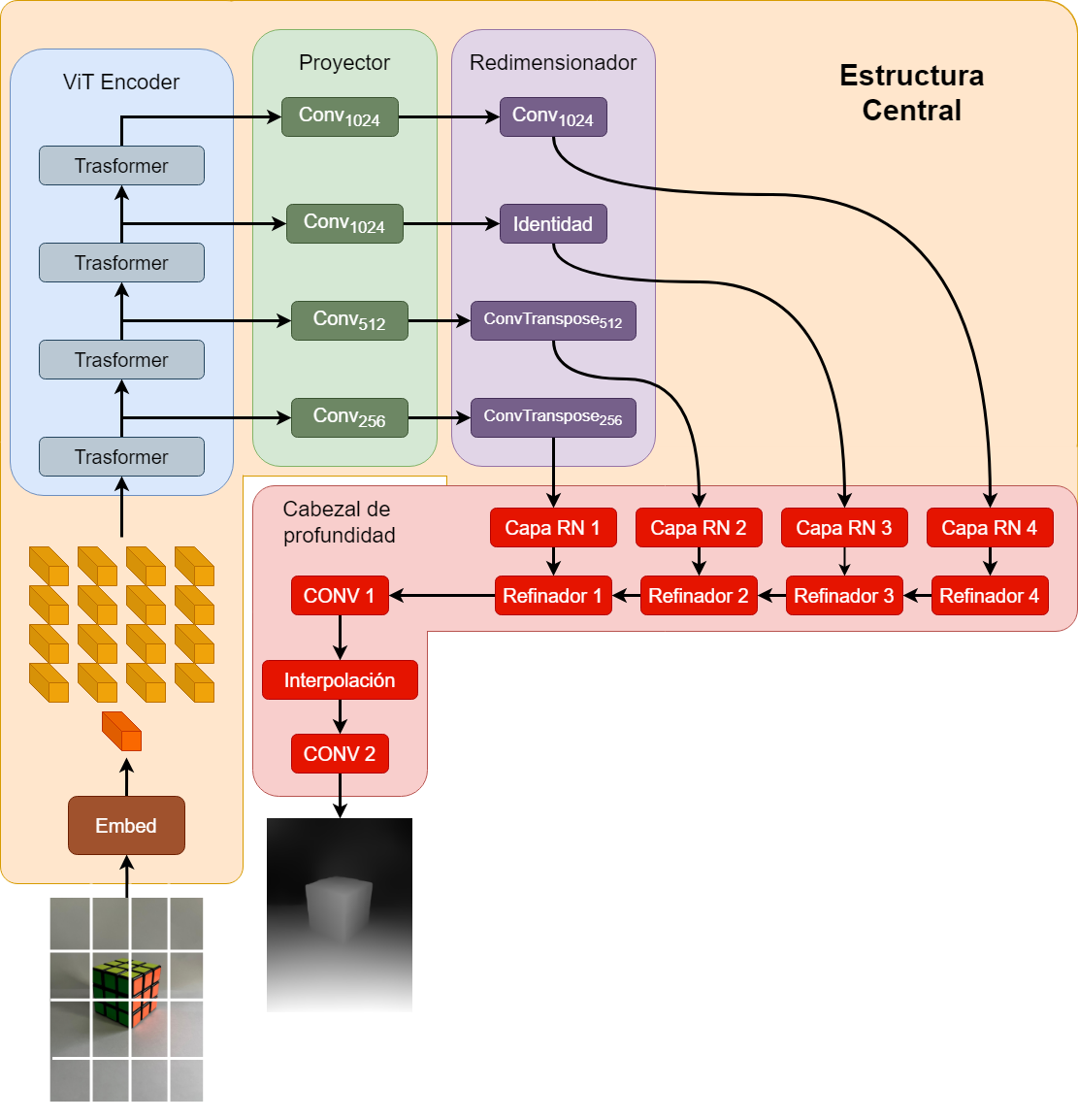

Depth estimation network adapted to fuse LiDAR points with RGB images.

Overview

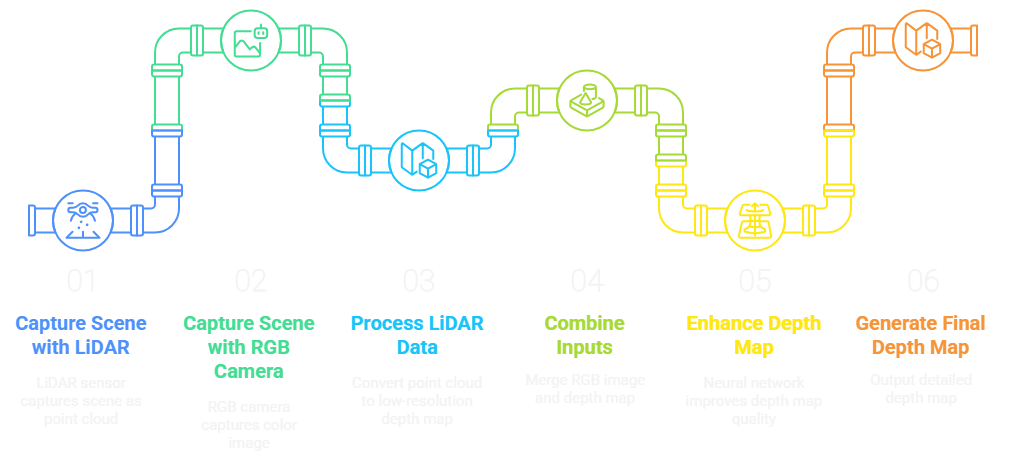

This project developed a depth estimation pipeline that integrates LiDAR measurements with RGB imagery. It combines the geometric structure from LiDAR with the visual context from the camera to generate dense depth maps suitable for real-world environments.

Tech Stack

- Python

A high-level, interpreted, open-source programming language.

- PyTorch

An open-source machine learning framework widely used for building and training deep learning models.

- OpenCV

An open-source computer vision library used across a wide range of image processing and vision tasks.

- NumPy

A Python library for working with multi-dimensional arrays.

- Blender

Free, open-source software for creating 3D content.

Challenges & Learnings

LiDAR and RGB camera calibration

Achieving accurate extrinsic calibration between the LiDAR and RGB camera was critical to ensure correct projection of 3D points onto the image plane. This required estimating precise transformation matrices, compensating for optical distortions, and handling differences in fields of view. Even minor misalignments introduced noticeable artifacts in the fused depth maps.
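The projection step described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: it assumes an ideal pinhole camera with no lens distortion, and the names `project_lidar_to_image`, `T_cam_lidar`, and `K` are hypothetical.

```python
import numpy as np

def project_lidar_to_image(points_lidar, T_cam_lidar, K, image_size):
    """Project (N, 3) LiDAR points into pixel coordinates.

    points_lidar: (N, 3) points in the LiDAR frame.
    T_cam_lidar:  (4, 4) extrinsic transform, LiDAR frame -> camera frame.
    K:            (3, 3) pinhole intrinsic matrix (distortion ignored here).
    image_size:   (height, width) of the RGB image.
    Returns (M, 2) pixel coordinates as (u, v) and (M,) depths for the
    points that are in front of the camera and inside the image.
    """
    n = points_lidar.shape[0]
    homogeneous = np.hstack([points_lidar, np.ones((n, 1))])
    points_cam = (T_cam_lidar @ homogeneous.T).T[:, :3]

    # Keep only points in front of the camera (positive depth).
    points_cam = points_cam[points_cam[:, 2] > 1e-6]

    # Perspective projection: apply K, then divide by depth.
    uvw = (K @ points_cam.T).T
    uv = uvw[:, :2] / uvw[:, 2:3]

    height, width = image_size
    inside = (
        (uv[:, 0] >= 0) & (uv[:, 0] < width)
        & (uv[:, 1] >= 0) & (uv[:, 1] < height)
    )
    return uv[inside], points_cam[inside, 2]
```

With this formulation, an error in `T_cam_lidar` shifts every projected point, which is why small calibration errors show up directly as misaligned depth in the fused map.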

Handling LiDAR sparsity when fusing with a dense image representation

LiDAR data is inherently sparse, especially in distant regions or on low-reflectivity surfaces. Integrating this sparse 3D information into a dense 2D image representation required spatial interpolation techniques, nearest-neighbor filtering, and multi-scale projection strategies. It was also necessary to design mechanisms that prevent the network from overfitting to densely sampled areas while remaining robust in regions with very few returns.
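One simple way to pre-fill a sparse depth map is an iterative 3x3 dilation that copies the closest valid depth into empty neighbours. The sketch below illustrates that idea only. It is an approximation of nearest-neighbor filling, not necessarily the interpolation the project used, and it assumes that a value of 0 marks a pixel with no LiDAR return. The function name `fill_sparse_depth` is hypothetical.

```python
import numpy as np

def fill_sparse_depth(sparse_depth, iterations=3):
    """Grow valid depths into empty pixels, one pixel ring per iteration.

    sparse_depth: (H, W) array, 0 where there is no LiDAR return (assumed).
    Returns the filled depth map and a boolean mask of measured pixels,
    so a network can tell real returns from filled-in values.
    """
    depth = sparse_depth.astype(np.float64).copy()
    measured = depth > 0
    h, w = depth.shape
    for _ in range(iterations):
        # Empty pixels become +inf so they never win the minimum.
        candidates = np.where(depth > 0, depth, np.inf)
        padded = np.pad(candidates, 1, constant_values=np.inf)
        # Stack the 3x3 neighbourhood of every pixel along a new axis.
        neighbours = np.stack([
            padded[dy:dy + h, dx:dx + w]
            for dy in range(3) for dx in range(3)
        ])
        # Closest surface wins when several neighbours are valid.
        nearest = neighbours.min(axis=0)
        fillable = (depth <= 0) & np.isfinite(nearest)
        depth[fillable] = nearest[fillable]
    return depth, measured
```

Returning the `measured` mask alongside the filled map is a design choice for this sketch: feeding it to the network as an extra channel is one common way to keep filled values from being trusted as much as real returns.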

Achieving stable predictions across different illumination conditions

The RGB camera is highly sensitive to lighting variations, shadows, underground conditions, and airborne dust common in mining environments. To ensure stable predictions, the pipeline incorporated photometric normalization, extensive data augmentation with extreme illumination scenarios, and domain-invariance strategies that reduced the model’s dependency on the specific lighting of each capture.
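As a rough illustration of the augmentation and normalization ideas above, the sketch below applies a random gamma and gain to simulate lighting changes, then standardizes each channel per image. The parameter ranges and function names are assumptions chosen for the example, not values from the project.

```python
import numpy as np

def augment_illumination(image, rng, gamma_range=(0.4, 2.5), gain_range=(0.5, 1.5)):
    """Simulate a lighting change on a float RGB image with values in [0, 1].

    Ranges are illustrative assumptions; gamma < 1 brightens shadows,
    gamma > 1 darkens them, and gain scales overall exposure.
    """
    gamma = rng.uniform(*gamma_range)
    gain = rng.uniform(*gain_range)
    return np.clip(gain * np.power(image, gamma), 0.0, 1.0)

def normalize_photometric(image, eps=1e-6):
    """Standardize each channel to zero mean and unit variance per image."""
    mean = image.mean(axis=(0, 1), keepdims=True)
    std = image.std(axis=(0, 1), keepdims=True)
    return (image - mean) / (std + eps)

# Usage: augment during training, normalize before feeding the network.
rng = np.random.default_rng(0)
image = rng.random((8, 8, 3))
augmented = augment_illumination(image, rng)
network_input = normalize_photometric(augmented)
```

Per-image standardization removes a global exposure scale, so two captures that differ only by a uniform gain map to nearly the same input.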

Training a deep network with limited resources

Training a deep model under constrained computational resources required optimizing the entire workflow. Lightweight architectures, mixed-precision training, reduced batch sizes, and gradient checkpointing were applied to prevent memory overflow. Additionally, early stopping and incremental evaluation strategies were used to achieve stable convergence without the need for high-end hardware.
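Of the techniques listed above, early stopping is the easiest to show in isolation. The sketch below is a generic patience-based version in plain Python. The class name, the `patience` and `min_delta` parameters, and the loss values are all hypothetical and not taken from the project.

```python
class EarlyStopping:
    """Stop training when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience      # epochs to wait without improvement
        self.min_delta = min_delta    # minimum decrease that counts as improvement
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Usage with made-up validation losses, one per evaluation round.
stopper = EarlyStopping(patience=2)
stopped_at = None
for epoch, loss in enumerate([1.0, 0.8, 0.9, 0.85, 0.7]):
    if stopper.step(loss):
        stopped_at = epoch
        break
```

In a real training loop, the best checkpoint would typically be saved whenever `best` is updated, so stopping early does not discard the best model.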

Outcome

The methodology achieved its primary objective: improving depth estimation by fusing sparse LiDAR measurements with RGB image information. Compared to using LiDAR data alone, the fusion-based approach produced depth maps with higher spatial detail, smoother surface continuity, and more complete geometry in regions where the LiDAR sensor provided few or no returns.

The experiments also confirmed an expected trend: LiDAR sensors with more channels consistently yielded better reconstruction quality, as the increased vertical resolution provided richer geometric cues for both the fusion process and the learning model.

While the methodology met its goal, the project also uncovered several opportunities for future improvement, such as automating the LiDAR–camera calibration process and expanding the dataset to include non-synthetic conditions with airborne dust and other environmental disturbances commonly present in mining scenarios.
